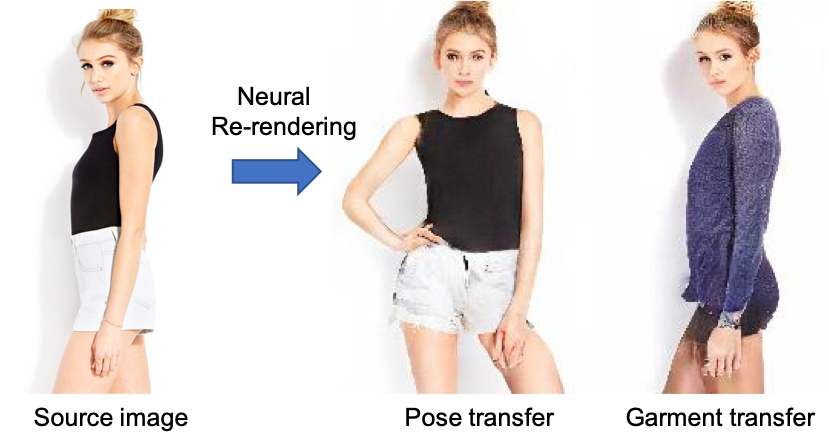

Neural Re-Rendering of Humans from a Single Image

Abstract

Human re-rendering from a single image is a starkly under-constrained problem, and state-of-the-art algorithms often exhibit undesired artefacts, such as over-smoothing, unrealistic distortions of the body parts and garments, or implausible changes of the texture. To address these challenges, we propose a new method for neural re-rendering of a human under a novel user-defined pose and viewpoint, given one input image. Our algorithm represents body pose and shape as a parametric mesh which can be reconstructed from a single image and easily reposed. Instead of a colour-based UV texture map, our approach further employs a learned high-dimensional UV feature map to encode appearance. This rich implicit representation captures detailed appearance variation across poses, viewpoints, person identities and clothing styles better than learned colour texture maps. The body model with the rendered feature maps is fed through a neural image-translation network that creates the final rendered colour image. The above components are combined in an end-to-end-trained neural network architecture that takes as input a source person image and images of the parametric body model in the source pose and the desired target pose. Experimental evaluation demonstrates that our approach produces higher-quality single-image re-rendering results than existing methods.
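The abstract describes rendering a learned high-dimensional UV feature map onto the reposed body model before the image-translation network. The sketch below illustrates only that lookup step, using plain bilinear sampling in NumPy. It assumes that per-pixel UV coordinates and a body mask have already been obtained by rasterising the posed mesh. The function name `sample_uv_features`, the array shapes and the 16-channel feature size are hypothetical choices for illustration, not the authors' implementation. Learning the feature map and the translation network are omitted.

```python
import numpy as np


def sample_uv_features(feature_map, uv, mask):
    """Bilinearly sample a UV feature map at per-pixel UV coordinates.

    feature_map: (H_t, W_t, D) array, a hypothetical learned D-channel UV map.
    uv: (H, W, 2) array of (u, v) in [0, 1] per image pixel, assumed to come
        from rasterising the posed body mesh.
    mask: (H, W) boolean array, True where the body covers the pixel.
    Returns an (H, W, D) image-space feature map, zero outside the body.
    """
    h_t, w_t, _ = feature_map.shape
    uv = np.clip(uv, 0.0, 1.0)  # keep lookups inside the UV atlas
    # Convert normalised UV to continuous texel coordinates.
    x = uv[..., 0] * (w_t - 1)
    y = uv[..., 1] * (h_t - 1)
    x0 = np.floor(x).astype(int)
    y0 = np.floor(y).astype(int)
    x1 = np.minimum(x0 + 1, w_t - 1)
    y1 = np.minimum(y0 + 1, h_t - 1)
    # Fractional offsets serve as bilinear interpolation weights.
    wx = (x - x0)[..., None]
    wy = (y - y0)[..., None]
    top = (1 - wx) * feature_map[y0, x0] + wx * feature_map[y0, x1]
    bottom = (1 - wx) * feature_map[y1, x0] + wx * feature_map[y1, x1]
    out = (1 - wy) * top + wy * bottom
    # Background pixels receive no body features.
    return np.where(mask[..., None], out, 0.0)


# Usage with random stand-ins for the learned map and the rasterised UVs.
rng = np.random.default_rng(0)
features = rng.standard_normal((64, 64, 16))
uv = rng.uniform(0.0, 1.0, size=(128, 96, 2))
mask = rng.uniform(size=(128, 96)) > 0.3
rendered = sample_uv_features(features, uv, mask)
print(rendered.shape)  # (128, 96, 16)
```

Because bilinear sampling is differentiable with respect to the feature values, the same lookup written in an autodiff framework would let gradients from the image-translation loss flow back into the UV feature map, which is what end-to-end training of such a representation would require.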

Results

We use the train/test pairs of the DeepFashion dataset that were also used in existing works such as PoseGAN, DPT and CBI. Specifically, our training and testing pairs were generated with the publicly available code of PoseGAN. On this page, we provide our results for the 176 testing pairs (a subset of the full testing pairs) that were used in the paper for the quantitative results. Please find our results in the Downloads section.

Downloads

Citation

@inproceedings{Sarkar2020,
  author = {Sarkar, Kripasindhu and Mehta, Dushyant and Xu, Weipeng and Golyanik, Vladislav and Theobalt, Christian},
  title = {Neural Re-Rendering of Humans from a Single Image},
  booktitle = {European Conference on Computer Vision (ECCV)},
  year = {2020}
}

Acknowledgments

This work was supported by the ERC Consolidator Grant 4DReply (770784).

Contact

For questions and clarifications, please get in touch with:

Kripasindhu Sarkar ksarkar@mpi-inf.mpg.de

Vladislav Golyanik golyanik@mpi-inf.mpg.de