SIGGRAPH Asia 2015

Generalizing Wave Gestures from Sparse Examples for Real-time Character Control

Helge Rhodin¹, James Tompkin², Kwang In Kim³, Edilson de Aguiar⁴, Hanspeter Pfister², Hans-Peter Seidel¹, Christian Theobalt¹

¹MPI für Informatik, ²Harvard Paulson SEAS, ³Lancaster University, ⁴Federal University of Espirito Santo

Abstract

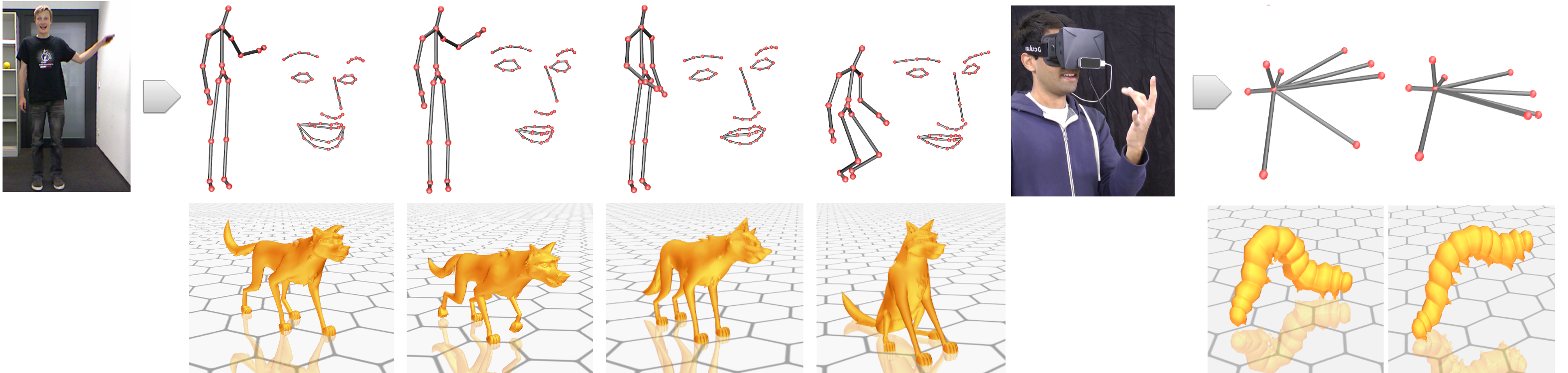

Motion-tracked real-time character control is important for games and VR, but current solutions are limited: retargeting is hard for non-human characters, with locomotion bound to the sensing volume; and pose mappings are ambiguous and not robust with consumer trackers, with dynamic motion properties unwieldy. We robustly estimate wave properties — amplitude, frequency, and phase — for a set of interactively-defined gestures, by mapping user motions to a low-dimensional independent representation. The mapping both separates simultaneous or intersecting gestures, and extrapolates gesture variations from single training examples. For animation control, e.g., locomotion, wave properties map naturally to stride length, step frequency, and progression, and allow smooth animation from standing, to walking, to running. Simultaneous gestures are disambiguated successfully. Interpolating out-of-phase locomotions is hard, e.g., quadruped legs between walks and runs, so we introduce a new time-interpolation scheme to reduce artifacts. These improvements to real-time motion-tracked character control are particularly important for common cyclic animations, which we validate in a user study, with versatility to apply to part and full body motions across a variety of sensors.
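As a rough illustration of the three wave properties named in the abstract, the sketch below estimates amplitude, frequency, and phase of a single one-dimensional cyclic signal with a plain FFT peak pick. This is not the paper's estimator, which works on a low-dimensional representation of tracked user motion. The function names `estimate_wave` and `wave_to_locomotion`, the `stride_gain` parameter, and the linear mapping to stride length are all hypothetical and chosen only for this example.

```python
import numpy as np


def estimate_wave(signal, sample_rate):
    """Estimate (amplitude, frequency in Hz, phase in rad) of the dominant
    sinusoid in a 1-D signal, modeled as amplitude * cos(2*pi*f*t + phase).

    Assumption: a simple FFT peak pick, used here for illustration only.
    """
    x = np.asarray(signal, dtype=float)
    x = x - x.mean()                      # remove the constant offset
    n = len(x)
    spectrum = np.fft.rfft(x)
    freqs = np.fft.rfftfreq(n, d=1.0 / sample_rate)
    k = int(np.argmax(np.abs(spectrum[1:]))) + 1   # strongest non-DC bin
    amplitude = 2.0 * np.abs(spectrum[k]) / n
    frequency = float(freqs[k])
    phase = float(np.angle(spectrum[k]))  # phase of the cosine at t = 0
    return float(amplitude), frequency, phase


def wave_to_locomotion(amplitude, frequency, phase, stride_gain=1.0):
    """Hypothetical mapping of wave properties to locomotion controls:
    amplitude -> stride length, frequency -> step frequency,
    phase -> progression through the cycle in [0, 1)."""
    two_pi = 2.0 * np.pi
    return {
        "stride_length": stride_gain * amplitude,
        "step_frequency": frequency,
        "progression": (phase % two_pi) / two_pi,
    }


# Usage: a synthetic 2 Hz gesture sampled at 60 Hz for 2 seconds.
t = np.arange(120) / 60.0
gesture = 0.5 * np.cos(2.0 * np.pi * 2.0 * t + 0.3)
amp, freq, ph = estimate_wave(gesture, sample_rate=60.0)
controls = wave_to_locomotion(amp, freq, ph, stride_gain=2.0)
print(controls)
```

An FFT peak is only accurate when the window holds a whole number of cycles, as in the synthetic signal above. A real-time controller would need an estimator that copes with short, noisy, changing input, which is the problem the paper addresses.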

Files

Paper PDF (9 MB)

Supplemental Material PDF (4 MB)

Presentation PPTX (150 MB)

Supplemental Video MP4 (120 MB)

Extra Comparisons MP4 (60 MB)

Acknowledgements

We thank Gabi Kussani, our professional Hohnsteiner puppeteer, our animators Gottfried Mentor and Cynthia Collins, Hung Vodinh and Joel Anderson for the horse character, Pakie Seung for the dog character, Harry Gladwin-Geoghegann for the dinosaur character, Yeongho Seol for his correspondence and his dinosaur animation, Gregorio Palmas and Hendrik Strobert for visualization help, and Michael Neff, Takaaki Shiratori, Kiran Varanasi, Simon Pilgrim, and all reviewers for their valuable discussion and feedback.

This research was partially funded by the ERC Starting Grant project CapReal (335545). Kwang In Kim thanks EPSRC EP/M00533X/1. James Tompkin and Hanspeter Pfister thank NSF CGV-1110955.