Non-Rigid Neural Radiance Fields:

Reconstruction and Novel View Synthesis of a

Dynamic Scene From Monocular Video

Abstract

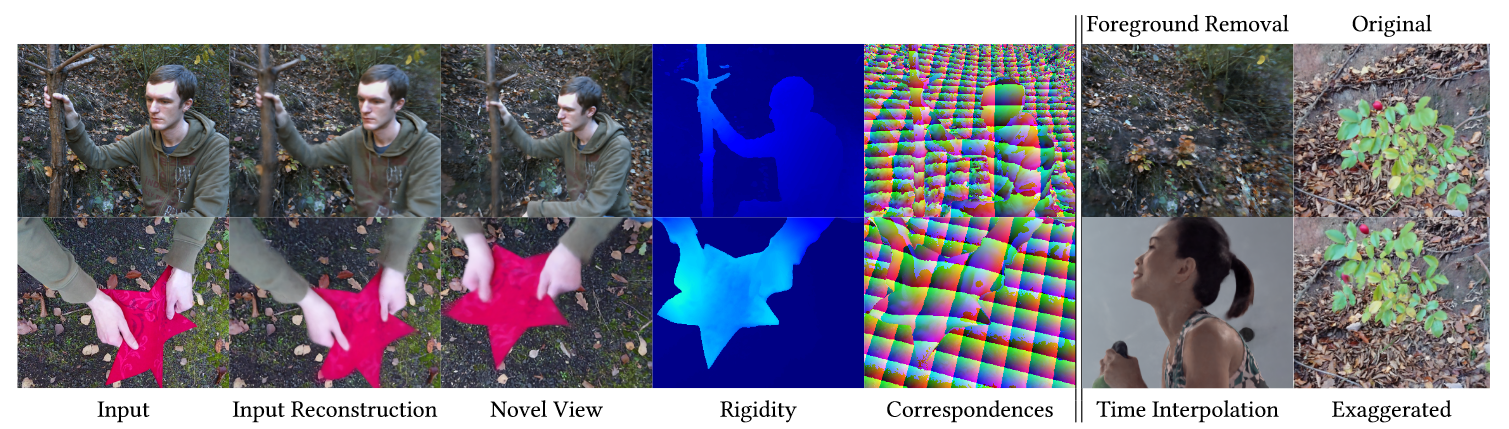

We present Non-Rigid Neural Radiance Fields (NR-NeRF), a reconstruction and novel view synthesis approach for general non-rigid dynamic scenes. Our approach takes RGB images of a dynamic scene as input (e.g., from a monocular video recording), and creates a high-quality space-time geometry and appearance representation. We show that a single handheld consumer-grade camera is sufficient to synthesize sophisticated renderings of a dynamic scene from novel virtual camera views, e.g. a `bullet-time' video effect. NR-NeRF disentangles the dynamic scene into a canonical volume and its deformation. Scene deformation is implemented as ray bending, where straight rays are deformed non-rigidly. We also propose a novel rigidity network to better constrain rigid regions of the scene, leading to more stable results. The ray bending and rigidity network are trained without explicit supervision. Our formulation enables dense correspondence estimation across views and time, and compelling video editing applications such as motion exaggeration. Our code will be open sourced.

Supplemental Video (450 MB)

VCAI Neural Rendering technology applied to The Matrix 4 movie.

The following shows results of an exciting experiment we did with our friends from Volucap in Berlin who did the special effects for the movie Matrix Resurrections. Input to the result above was a multi-view video sequence filmed with a seven camera rig. We then applied our NR-NerF algorithm to it to use neural rendering for creating a virtual bullet time camera path through the scene. Also, we could extract structure and motion information from the scene. The shot was not used like this in the movie, but the result shows the exciting potential of our neural rendering techniques also in cutting-edge movie productions. See also https://volucap.com/portfolio-items/the-matrix-resurrections/Downloads

Citation

@inproceedings{tretschk2021nonrigid,

title = {Non-Rigid Neural Radiance Fields: Reconstruction and Novel View Synthesis of a Dynamic Scene From Monocular Video},

author = {Tretschk, Edgar and Tewari, Ayush and Golyanik, Vladislav and Zollh\"{o}fer, Michael and Lassner, Christoph and Theobalt, Christian},

booktitle = {{IEEE} International Conference on Computer Vision ({ICCV})},

organization = {{IEEE}},

year = {2021},

}

Acknowledgments

All data capture and evaluation was done at MPII and Volucap. Research conducted by Ayush Tewari, Vladislav Golyanik and Christian Theobalt at MPII was supported in part by the ERC Consolidator Grant 4DReply (770784). This work was also supported by a Facebook Reality Labs research grant. We thank Volucap for providing the multi-view data.

Contact

For questions, clarifications, please get in touch with:Edgar Tretschk tretschk@mpi-inf.mpg.de