Monocular 3D Human Pose Estimation In The Wild Using Improved CNN Supervision

Abstract

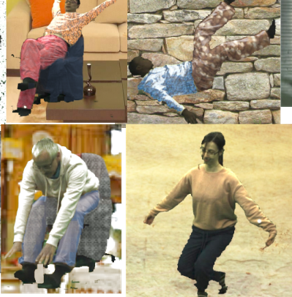

We propose a CNN-based approach for 3D human body pose estimation from single RGB images that addresses the issue of limited generalizability of models trained solely on the starkly limited publicly available 3D pose data. Using only the existing 3D pose data and 2D pose data, we show state-of-the-art performances on established benchmarks through transfer of learned features, while also generalizing to in-the-wild scenes. We further introduce a new training set for human body pose estimation from monocular images of real humans that has the ground truth captured with a multi-camera marker-less motion capture system. It complements existing corpora with greater diversity in pose, human appearance, clothing, occlusion, and viewpoints, and enables an increased scope of augmentation. We also contribute a new benchmark that covers outdoor and indoor scenes, and demonstrate that our 3D pose dataset shows better in-the-wild performance than existing annotated data, which is further improved in conjunction with transfer learning from 2D pose data. All in all, we argue that the use of transfer learning of representations in tandem with algorithmic and data contributions is crucial for general 3D body pose estimation.

Citation

@inproceedings{mono-3dhp2017,
  author = {Mehta, Dushyant and Rhodin, Helge and Casas, Dan and Fua, Pascal and Sotnychenko, Oleksandr and Xu, Weipeng and Theobalt, Christian},
  title = {Monocular 3D Human Pose Estimation In The Wild Using Improved CNN Supervision},
  booktitle = {3D Vision (3DV), 2017 Fifth International Conference on},
  url = {http://gvv.mpi-inf.mpg.de/3dhp_dataset},
  year = {2017},
  organization = {IEEE},
  doi = {10.1109/3dv.2017.00064},
}

Acknowledgments

This work was funded by the ERC Starting Grant project CapReal (335545). Dan Casas was supported by a Marie Curie Individual Fellowship, grant agreement 707326, and Helge Rhodin by the Microsoft Research Swiss JRC. We also thank Foundry for license support.

Contact

For questions and clarifications, please get in touch with:

Dushyant Mehta

dmehta@mpi-inf.mpg.de